æroflux – AAAA

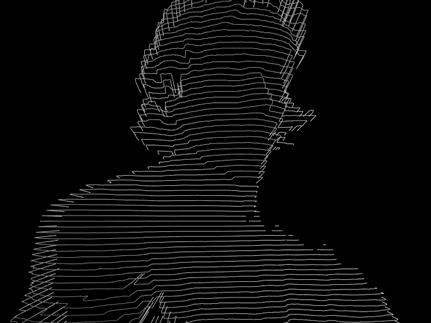

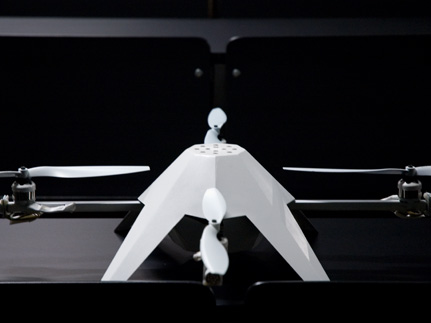

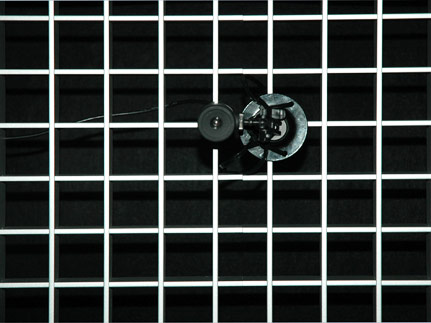

æroflux consists of an arthropod (currently a cockroach) placed in a petri dish on top of an aerial vehicle. The insect steers the vehicle through its movements and position, which are detected by photoresistors mounted beneath the petri dish. The aerial vehicle is based on a modified quadrotor, cocooned in a fiberglass hull. Ultrasonic sensors help it avoid collisions. The project is the direct opposite of current DARPA-sponsored research on cyborg beetles: there, humans control the animal's flight through an implanted microelectronic system, whereas here the insect controls the machine's flight.

AAAA stands for Autonomous Arthropod Aerial Appliance.
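
The steering principle described above, where light sensors under the dish locate the insect and its position drives the vehicle, could be sketched roughly as follows. This is only an illustration and not the project's actual control code: the four-sensor layout, the normalised readings (1.0 fully lit, 0.0 fully shaded), the dead zone, the tilt limit, and the names `Sensor`, `estimate_position` and `steering_command` are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Sequence, Tuple


@dataclass(frozen=True)
class Sensor:
    """Hypothetical photoresistor location under the dish, in dish-radius units."""
    x: float  # -1.0 (left edge) .. 1.0 (right edge)
    y: float  # -1.0 (rear edge) .. 1.0 (front edge)


def estimate_position(
    sensors: Sequence[Sensor],
    readings: Sequence[float],
    min_shadow: float = 0.05,
) -> Tuple[float, float]:
    """Estimate the insect's position as a shadow-weighted centroid.

    Assumes each reading is a normalised light level: 1.0 means fully lit,
    0.0 means fully shaded by the insect.
    """
    if len(sensors) != len(readings):
        raise ValueError("need exactly one reading per sensor")
    # A darker sensor gets a larger weight.
    weights = [max(0.0, 1.0 - r) for r in readings]
    total = sum(weights)
    if total < min_shadow:
        # No clear shadow: treat the insect as centred.
        return (0.0, 0.0)
    x = sum(w * s.x for w, s in zip(weights, sensors)) / total
    y = sum(w * s.y for w, s in zip(weights, sensors)) / total
    return (x, y)


def steering_command(
    position: Tuple[float, float],
    dead_zone: float = 0.1,
    max_tilt_deg: float = 10.0,
) -> Tuple[float, float]:
    """Map an estimated position to (roll, pitch) tilt angles in degrees."""

    def axis(value: float) -> float:
        # Ignore small offsets so the vehicle hovers when the insect is near the centre.
        if abs(value) < dead_zone:
            return 0.0
        return max(-1.0, min(1.0, value)) * max_tilt_deg

    x, y = position
    return (axis(x), axis(y))


# Assumed layout: one sensor at each edge of the dish (left, right, rear, front).
SENSORS = [Sensor(-1.0, 0.0), Sensor(1.0, 0.0), Sensor(0.0, -1.0), Sensor(0.0, 1.0)]
```

With this layout, a shadow over only the right-hand sensor places the estimate at the right edge of the dish and yields a full roll command, while an evenly lit dish yields no tilt at all. The collision avoidance handled by the ultrasonic sensors is left out of the sketch.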
